SignalR can be downloaded from NuGet and is set up as follows: (see the ASP.

Two scroll settings apply to CSV exports from Kibana. The duration setting is the amount of time allowed before Kibana cleans the scroll context during a CSV export. The size setting is the number of documents retrieved from Elasticsearch for each scroll iteration during a CSV export.

The class includes a public int BusinessEntityID property. The different attributes are added as required.

After you download the crx file for ElasticSearch CSV Exporter 0.4, open Chrome's extensions page (chrome://extensions/ or find by Chrome menu icon > More tools).

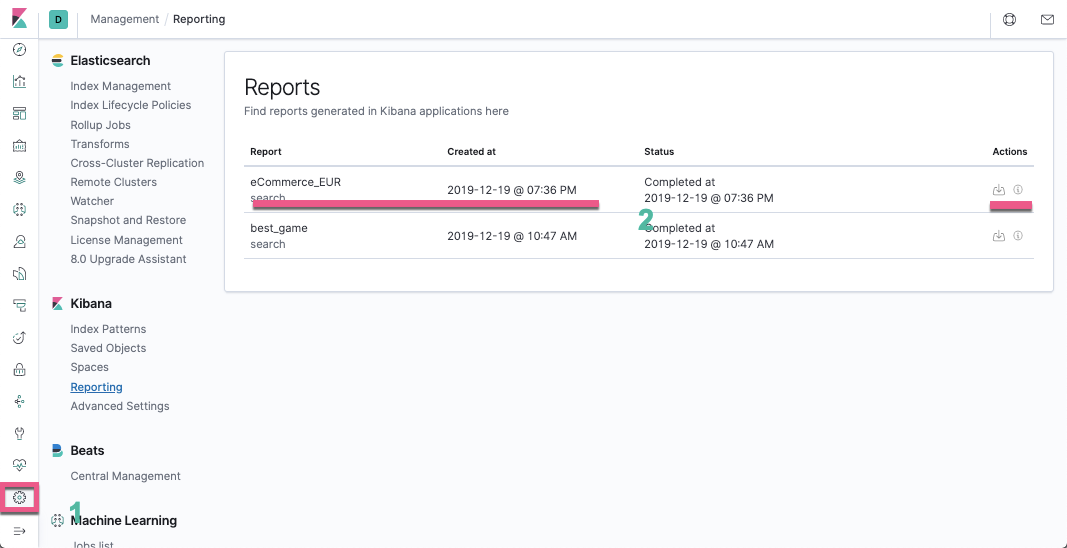

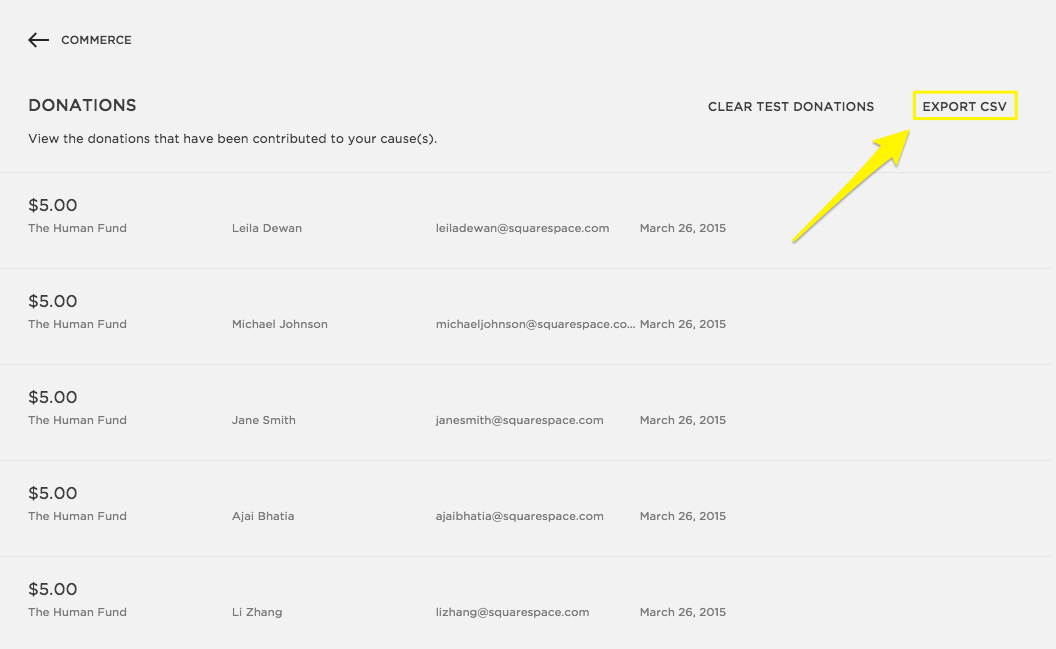

The Person class is used to retrieve the data from Elasticsearch and also to export the data to the CSV file. Because the index holds very little data, about 20,000 records, it can be exported as a single CSV file, in a single chunk. The export uses the persons index created in the previous post. This index, persons_v2, is accessed using the alias persons.

Elastic Stack enables us to easily analyze any data. In this blog, I am going to explain how you can import publicly available CSV data into Elasticsearch.

Then you save the search, go to Share > CSV Reports > Generate CSV in the top bar, wait for the report to be generated, go to Stack Management > Kibana > Reporting, hope that a previous version of.

Several tools cover the same task. One is a CLI tool for exporting data from Elasticsearch into a CSV file. Another is a command-line utility, written in Python, for querying Elasticsearch in Lucene query syntax or Query DSL syntax and exporting the results as documents into a CSV file. This tool can query bulk docs in multiple indices and get only selected fields, which reduces query execution time.

I have an application pumping logs to an AWS OpenSearch (earlier Elasticsearch) cluster. I want to move old logs to S3 to save cost and still be able to read the logs (occasionally). One approach I can think of is writing a cron job that reads the old indexes, writes them (in text format) to S3, and deletes the indexes.

- Part 19: Index Warmers with ElasticsearchCRUD
- Part 18: MVC searching with Elasticsearch Highlighting
- Part 17: Searching Multiple Indices and Types in Elasticsearch
- Part 16: Elasticsearch Aggregations With ElasticsearchCRUD
- Part 14: Search Queries and Filters with ElasticsearchCRUD
- Part 13: MVC google maps search using Elasticsearch
- Part 12: Using Elasticsearch German Analyzer
- Part 11: Elasticsearch Synonym Analyzer using ElasticsearchCRUD
- Part 10: Elasticsearch Type mappings with ElasticsearchCRUD
- Part 9: Elasticsearch Parent, Child, Grandchild Documents and Routing
- Part 8: CSV export using Elasticsearch and Web API
- Part 6: MVC application with Entity Framework and Elasticsearch
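The export step described above, writing only selected fields of each retrieved document to a CSV file, can be sketched in a few lines of Python. This is a minimal illustration and not the code used in the post: the function name hits_to_csv and the FirstName and LastName fields are assumptions made for the example; only BusinessEntityID appears in the original text.

```python
import csv
import io


def hits_to_csv(hits, fields, out):
    """Write the selected _source fields of each hit as one CSV row."""
    writer = csv.DictWriter(out, fieldnames=fields)
    writer.writeheader()
    for hit in hits:
        source = hit.get("_source", {})
        # Fields missing from a document become empty cells.
        writer.writerow({name: source.get(name, "") for name in fields})
    return out


# Hypothetical hits, shaped like the "hits" array of a search response.
sample_hits = [
    {"_id": "1", "_source": {"BusinessEntityID": 1, "FirstName": "Ada", "LastName": "Lovelace", "Extra": "x"}},
    {"_id": "2", "_source": {"BusinessEntityID": 2, "FirstName": "Alan"}},
]

buffer = hits_to_csv(sample_hits, ["BusinessEntityID", "FirstName", "LastName"], io.StringIO())
print(buffer.getvalue())
```

Restricting the row to a fixed field list keeps the CSV columns stable even when documents carry extra or missing properties.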

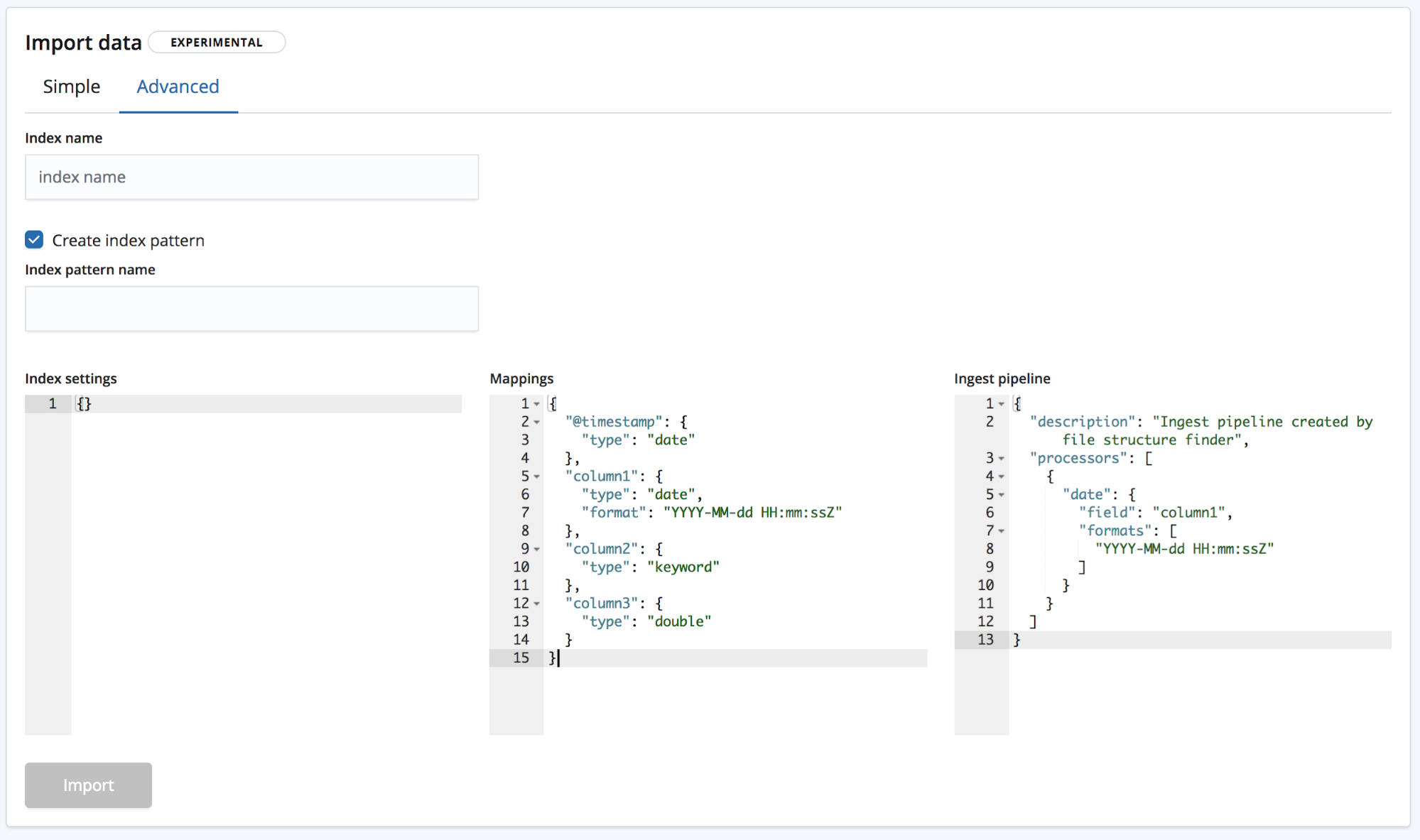

- Part 5: MVC Elasticsearch with child, parent documents
- Part 4: Data Transfer from MS SQL Server using Entity Framework to Elasticsearch
- Part 3: MVC Elasticsearch CRUD with nested documents
- Part 2: MVC application search with simple documents using autocomplete, jQuery and jTable

I use AWS Elasticsearch instead of Elasticsearch. That is why I am trying to export indices from an AWS Elasticsearch domain into CSV files. I saw the elasticsearch input plugin on the Elastic site, but I don't see anything about an AWS Elasticsearch input plugin.

In fact, the Elastic stack does natively support this kind of export, through the Generate CSV function of the Kibana Discover section.

Thanks Emma, but AWS Elasticsearch doesn't provide the X-Pack feature so far.

If there is an available index in the cluster where you want to import data, skip this step. If there is no available index, create one first.

This article demonstrates how to export data from Elasticsearch to a CSV file using Web API. The data is retrieved from Elasticsearch using _search with scan and scroll. This API can retrieve data very fast without any sorting. The data is then exported to a CSV file using a library from Jordan Gray. The progress of the export is displayed in an HTML page using SignalR (MVC razor view).
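The scroll-based retrieval mentioned above follows a simple loop: run one search that opens a scroll context, then keep requesting the next page with the returned scroll id until a page comes back empty. The Python sketch below shows only that loop. The names scroll_all and make_fake_cluster are hypothetical, and the fake cluster is an in-memory stand-in so the example runs without Elasticsearch. Against a real cluster, first_page would send the initial search with a scroll parameter (for example POST /persons/_search?scroll=1m) and next_page would send POST /_search/scroll with the scroll id. Newer Elasticsearch versions no longer have the scan search type and sort by _doc instead to get the same unsorted, fast retrieval.

```python
def scroll_all(first_page, next_page):
    """Yield every hit, following scroll ids until a page comes back empty.

    first_page() returns the response of the initial scrolled search.
    next_page(scroll_id) returns the response of the follow-up scroll request.
    """
    response = first_page()
    while True:
        hits = response["hits"]["hits"]
        if not hits:
            break
        yield from hits
        response = next_page(response["_scroll_id"])


def make_fake_cluster(docs, page_size):
    """In-memory stand-in for Elasticsearch (assumption for this sketch)."""
    def page(offset):
        chunk = docs[offset:offset + page_size]
        return {
            "_scroll_id": str(offset + page_size),
            "hits": {"hits": [{"_source": doc} for doc in chunk]},
        }
    return (lambda: page(0)), (lambda scroll_id: page(int(scroll_id)))


first, following = make_fake_cluster([{"id": i} for i in range(5)], page_size=2)
exported = [hit["_source"]["id"] for hit in scroll_all(first, following)]
print(exported)  # [0, 1, 2, 3, 4]
```

Because the loop is a generator, the export can write each page to the CSV file as it arrives instead of holding the whole index in memory.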

Using Kibana or APIs to Import Data to Elasticsearch

You can import data in various formats, such as JSON and CSV, to Elasticsearch in CSS by using Kibana or APIs.

Importing Data Using Kibana

Before importing data, ensure that you can use Kibana to access the cluster. The following procedure illustrates how to use the POST command to import data.

1. Locate the target cluster and click Access Kibana in the Operation column to log in to Kibana.
2. Click Dev Tools in the navigation tree on the left.
3. (Optional) On the Console page, run the related command to create an index for the data to be stored and specify a custom mapping to define the data type.
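To import CSV data with a POST command, the rows first have to be converted into the newline-delimited JSON body that the Elasticsearch _bulk API expects: one action line followed by one document line per row. The Python sketch below shows that conversion under stated assumptions: the function name csv_to_bulk, the index name my_store, and the sample columns are made up for illustration, and every CSV value is kept as a string.

```python
import csv
import io
import json


def csv_to_bulk(csv_text, index):
    """Turn CSV text into an NDJSON body for the _bulk API."""
    lines = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Action line: index the next document into the given index.
        lines.append(json.dumps({"index": {"_index": index}}))
        # Document line: the CSV row, with all values left as strings.
        lines.append(json.dumps(row))
    # The bulk body must end with a newline.
    return "\n".join(lines) + "\n"


# Hypothetical sample data and index name.
sample_csv = "id,name\n1,alpha\n2,beta\n"
bulk_body = csv_to_bulk(sample_csv, "my_store")

print("POST /_bulk")
print(bulk_body, end="")
```

The printed request can be pasted into the Kibana Console. If numeric or date types matter, convert the values before serializing or define them in the index mapping.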
